On Friday, May 3rd, I attended the ‘Social Media Platforms between Private, Public and Commercial Space’ panel, where Tarleton Gillespie talked about Curation by Algorithm. Based on his chapter ‘The Relevance of Algorithms’ [pdf] in the forthcoming book Media Technologies, Gillespie posed a few questions:

1. What do these algorithms do?

Algorithms are part of the broader ‘content moderation’ picture where devices, search engines and algorithms help us sift through an enormous amount of content, for example by implementing recommendation algorithms. They also help present a carefully managed experience for first-time users by constructing a welcoming environment filled with (introductory) content or by guiding users through the interface.

2. How are these algorithms understood?

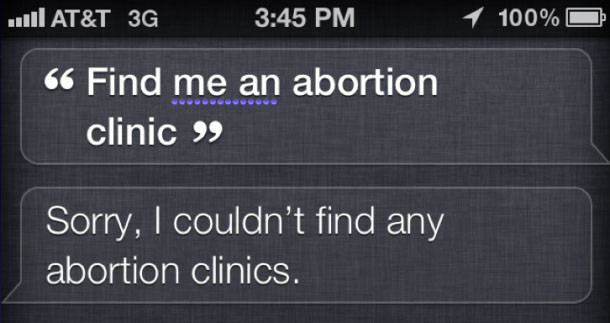

Algorithms are often discussed in terms of filtering or censoring content, as put forward in The Filter Bubble, but to what extent do algorithms act on, or become entangled in, practices of censorship by other means? Gillespie gave the example of Apple’s voice search Siri, which couldn’t find any abortion clinics when users searched for them. While Apple quickly responded that this was a ‘glitch’ and not an intentional omission, it caused a ‘Siri is pro-life’ controversy.

However, what actually happened is that Siri is a voice interface on top of search engines, and it turns out that pro-life organizations are better optimized for search engines. When Siri showed no results for [abortion clinic] or showed pro-life results, it wasn’t because Apple is censoring pro-abortion results but because “Planned Parenthood doesn’t call itself an abortion clinic” and because pro-life organizations use the term abortion more (see Search Engine Land on Why Siri Can’t Find Abortion Clinics & How It’s Not An Apple Conspiracy). Thus, these results weren’t censored or ‘algorithmically demoted’ (Gillespie) but didn’t appear due to SEO strategies or a lack thereof. Algorithms are blurring the line, in our understanding, between removing and obscuring results.

The example of Apple’s denial of a ‘pro-life Siri’ shows how “the providers of information algorithms must assert that their algorithm is impartial. The performance of algorithmic objectivity has become fundamental to the maintenance of these tools as legitimate brokers of relevant knowledge.” (Gillespie 2013)

3. Who do they seem to belong to?

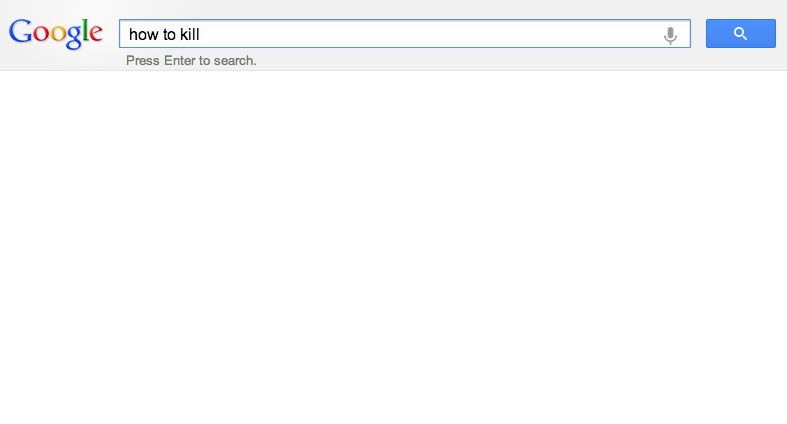

In the case of recommendation algorithms such as Google Instant’s auto-complete algorithm, it can seem like the site is speaking to us. The query [how to kill] doesn’t show any results, or rather, any algorithmic recommendations, but it does seem to give Google a voice. Algorithmic results are conflated with the voice of Google.

4. What are the fraught politics of algorithms?

Such examples show the politics of algorithms, the larger (web) practices they may be entangled in and how users read and act on algorithmic ‘behavior’ in a particular way.

5. How do they create “calculated publics”?

Algorithms may have particular consequences, which Gillespie explains through the production of “calculated publics: how the algorithmic presentation of publics back to themselves shape a public’s sense of itself, and who is best positioned to benefit from that knowledge.” Building on the notion of ‘networked publics’, where publics are formed through social media, algorithms may shape and form publics too. Common critiques of the formation of publics within social media are related to the ‘daily me’ or the filter bubble: whether or not we receive enough diverse perspectives through personalization. While not necessarily disagreeing with these critiques, Gillespie argues that there is also something else happening within networked publics, where algorithms play a role in the constitution of publics. Not only do algorithms play a role in the filtering and displaying of content to inform publics, but they also algorithmically shape publics:

But algorithms not only structure our interactions with others as members of networked publics; algorithms also traffic in calculated publics that they themselves produce. When Amazon recommends a book that “customers like you” bought, it is invoking and claiming to know a public with which we are invited to feel an affinity - though the population on which it bases these recommendations is not transparent, and is certainly not coterminous with its entire customer base. (Gillespie 2013)

While users may target a specific group of friends through their configuration of privacy settings (friends of friends on Facebook) or through the use of platform-specific features to target content at a specific group (hashtags on Twitter and Instagram), the social media platforms themselves construct publics through the use of an opaque algorithm. As a result, the “friction between the ‘networked publics’ forged by users and the ‘calculated publics’ offered by algorithms further complicates the dynamics of networked sociality.” (Gillespie 2013)